Information system for scene perception and object detection in autonomous vehicles

Technology is constantly transforming the world around us, and autonomous vehicles are one of the most important technological advancements, as they have the power to significantly change transportation and driver behavior.

At AROBS, we encourage our specialists to share knowledge and offer valuable insights from their software development projects and experience. Below is the article written by Victor P. on how he developed an information system for scene perception and object detection in autonomous vehicles. Victor is a talented software developer from AROBS Software Chișinău who contributes significantly to our software engineering services.

“The important thing is not to stop questioning,” Albert Einstein.

Autonomous vehicles have developed considerably in the last decade, mainly thanks to advances in machine vision and artificial intelligence. The growing interest comes from the prospect of improving driving comfort and reducing the number of road accidents. To achieve this, autonomous vehicles use sensors such as lidars and cameras to perceive their surroundings. They can detect objects such as pedestrians, cars, traffic signs, and lanes, estimate speed and movement trajectory, and make real-time decisions to avoid collisions.

Good motivation is the key to success in any project

My motivation to study and implement this project was:

- One of the fastest-growing branches of the automotive industry and research in the field of embedded control systems.

- The prospect of a new technological and intellectual leap for all humankind.

- Development of intelligent automatic visual recognition systems, processing and extracting useful information.

- The study of medicine, neural networks, optics, and programming.

- Development of intelligent computer vision systems based on this knowledge.

- Further use of acquired skills and knowledge in everyday work.

The main objective of this research

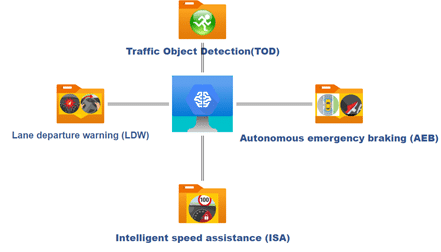

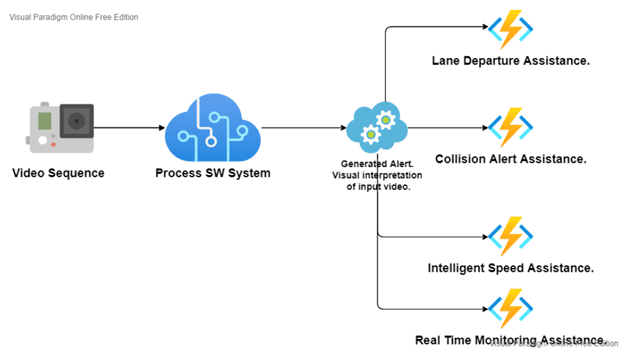

This research aims to develop a system that processes the input data and generates alert signals to ensure the safety of the vehicle and its passengers by preventing possible collisions with other road users. The system’s primary purpose is to assist the driver by continuously monitoring the traffic situation and generating visual signals. The project is exploratory: it investigates how Computer Vision and Deep Learning technologies can be used further in autonomous vehicles.

The system to be developed should have the following functional characteristics:

- It processes the input data and generates alert signals based on this data.

- It assists the driver by continuously monitoring the traffic situation.

- It collects and categorizes additional information about the road situation extracted from the image, such as:

  - the number of cars in the opposite lane;

  - the number of people in front of the vehicle;

  - the period of the day (evening, night).
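The additional information listed above could be collected per frame in a small record. The following is only a sketch: the names `SceneInfo`, `TimeOfDay`, and `summarize` are hypothetical and do not come from the project, and the `DAY` value is an assumption added for completeness.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List


class TimeOfDay(Enum):
    DAY = "day"          # assumption: not listed in the article
    EVENING = "evening"
    NIGHT = "night"


@dataclass
class SceneInfo:
    """Information extracted from one video frame (hypothetical structure)."""
    oncoming_cars: int = 0   # cars counted in the opposite lane
    people_ahead: int = 0    # people detected in front of the vehicle
    time_of_day: TimeOfDay = TimeOfDay.DAY
    alerts: List[str] = field(default_factory=list)  # alert signals raised


def summarize(info: SceneInfo) -> str:
    """Build a one-line, human-readable summary of a frame."""
    parts = [
        f"oncoming cars: {info.oncoming_cars}",
        f"people ahead: {info.people_ahead}",
        f"time of day: {info.time_of_day.value}",
    ]
    if info.alerts:
        parts.append("alerts: " + ", ".join(info.alerts))
    return "; ".join(parts)
```

A record like this keeps the detection code separate from whatever displays the visual signals to the driver.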

What about the international standards for Autonomous Vehicles?

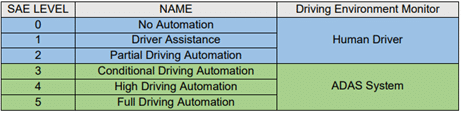

Autonomous vehicles are classified according to the SAE J3016 standard, developed by SAE International.

The standard defines six levels of driving automation, from SAE level 0 (no automation) to SAE level 5 (full automation). The system developed in this study corresponds to SAE level 1 (driver assistance): it monitors the road situation in real time and generates visual signals for the driver in dangerous situations.
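The six levels can be written down as a small enumeration. This is an illustrative sketch, not part of the project code; the helper `driver_must_supervise` is a hypothetical name that reflects the standard's split between driver support (levels 0 to 2) and automated driving (levels 3 to 5).

```python
from enum import IntEnum


class SAELevel(IntEnum):
    """Levels of driving automation as named in SAE J3016."""
    NO_DRIVING_AUTOMATION = 0
    DRIVER_ASSISTANCE = 1
    PARTIAL_DRIVING_AUTOMATION = 2
    CONDITIONAL_DRIVING_AUTOMATION = 3
    HIGH_DRIVING_AUTOMATION = 4
    FULL_DRIVING_AUTOMATION = 5


def driver_must_supervise(level: SAELevel) -> bool:
    """At levels 0-2 the human driver supervises the driving at all times."""
    return level <= SAELevel.PARTIAL_DRIVING_AUTOMATION


# The system described in this article targets level 1.
TARGET_LEVEL = SAELevel.DRIVER_ASSISTANCE
```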

Literature Review

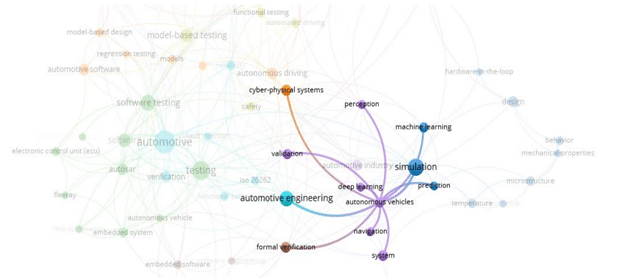

Experience is essential in developing new solutions. Studying what other researchers and teams have already learned makes it possible to propose new solutions or improve existing ones. I studied a series of scientific papers on digital image processing, the processing and interpretation of road traffic information, the use of neural networks in autonomous driving, image segmentation methods, algorithms for detecting lines, traffic lights, cars, and so on, and the importance of standardizing autonomous driving systems. Studying these topics made it possible to create the approach described and implemented in this paper. Reviewing existing papers is important because it gives an up-to-date view of perception technologies for autonomous cars. Figure 3 presents a map of articles on autonomous vehicles and derived topics. The map shows that a significant branch of the articles describes the perception of a road traffic scene. Perception systems are one of the essential sub-areas of autonomous driving: they acquire road traffic information and generate decisions based on it.

First, note that autonomous driving is a term for systems used in the automotive field that control the car’s movement without human interaction. These systems are very complex. They involve many subsystems, logical and physical layers, and different kinds of information acquisition, decision-making, and embedded systems. They use sensors, actuators, machine learning, and complex and powerful algorithms to travel between destinations without a human operator. “The sensors gather real–time data of the surrounding environment including geographical coordinates, speed and direction of the car, its acceleration and the obstacles which the vehicle can encounter.”

Two significant technical challenges remain: perception and decision-making. Machine learning algorithms, in particular deep networks, are used to address them. This has significantly changed how these systems are tested, but it has also given machine vision systems major new possibilities for detecting and perceiving a scene. As a rule, supervised learning techniques are used to train these neural networks. Deep learning approaches for intelligent computer vision perception systems consist of three major building blocks: data preparation, model generation, and model deployment.

The first stage, data preparation, focuses on preparing data for training and testing neural networks. This stage requires a large amount of data, such as images of road situations, people, cars, etc.

The model generation stage involves the development of network architecture.

The last stage is model deployment, in which the trained neural networks are tested. A functional trained deep-learning model would need a dataset that covers 100 percent of real-world scenes. That is currently impossible, because such a dataset would be on the order of a thousand gigabytes. Another problem is that real traffic situations can contain anomalies. The main question is how to teach intelligent computer vision systems to detect these anomalies and make decisions accordingly.

Companies are studying these problems today. The most relevant answer is to use synthetic data and simulation environments, which make it possible to simulate and test in conditions close to reality.

The growing interest in autonomous cars has also led to the development of several testing platforms, such as SimLab. SimLab was set up at fkaSV (a subsidiary of the German company fka) and gives new start-ups in autonomous car technologies a place to test their solutions, including algorithms for autonomous cars, behavioral testing, application testing, and communication testing. Testing and validating trained models and developed systems require innovative testing environments and techniques. Vehicles must be tested under different traffic and environmental conditions.

The development of autonomous driving systems in recent years has raised several problems in international law and in the regulation of autonomous car behavior, leading to the creation of an international standard for car automation, SAE J3016. Since its introduction, the standard has become firmly established among representatives of the automotive sector. It is therefore imperative to update, correct, and supplement the document periodically and promptly, so that it contains up-to-date information and reflects the actual situation in the industry. The current version of the standard provides a more descriptive visualization of the SAE automation levels.

Neural networks have enormous potential in autonomous driving and can solve the most complex tasks, but they require a set of images as input. These images must meet specifications such as size and color, and they must be processed so that the neural network can extract the necessary information. In the articles studied, all images undergo processing steps such as binarization, formatting, and segmentation. These steps matter because they prepare a clear image for detection. In real conditions, the image the neural network interprets can differ by up to 80% from the actual scene captured from the dashboard.

Telling examples are rain, snowflakes, various areas, and optical errors, all of which must be detected and removed from the original image. Knowledge and use of these digital image processing technologies are the basis of every intelligent visual scene perception system. If the computing power of the hardware allows several neural networks to be used, for instance one for detecting cars and one for traffic lights, this approach is welcome because it offers a high detection rate. Still, it is worth mentioning that such an approach requires enormous financial resources.
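Of the preprocessing steps named in this review, binarization is the simplest to illustrate. The sketch below assumes a grayscale image stored as a list of rows with values from 0 to 255 and a fixed threshold of 128; both the representation and the threshold are assumptions made for illustration, not values from the studied papers.

```python
from typing import List

GrayImage = List[List[int]]  # rows of pixel intensities, 0..255


def binarize(image: GrayImage, threshold: int = 128) -> GrayImage:
    """Map every pixel to 0 (below threshold) or 255 (at or above it)."""
    return [
        [255 if pixel >= threshold else 0 for pixel in row]
        for row in image
    ]


# Tiny 2x4 example: a dark road surface with a bright lane marking.
frame = [
    [30, 40, 210, 35],
    [25, 127, 128, 45],
]
binary = binarize(frame)
```

After binarization only two intensity values remain, which makes later steps such as line extraction less sensitive to gradual lighting changes.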

Description of the designed system

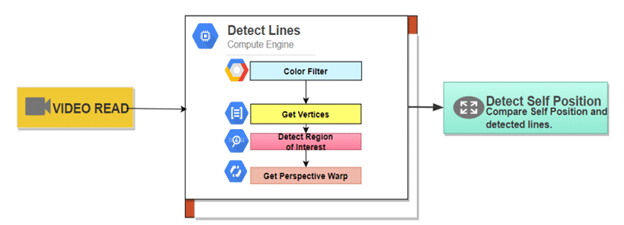

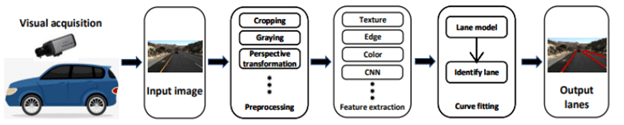

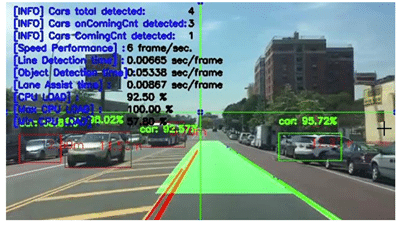

The project is an experimental system (Figure 4) in artificial intelligence and machine vision, built to study modern information processing techniques such as machine vision and neural networks. The primary source of information is a video sequence containing one or more real situations recorded from the car’s dashboard. The system aims to process this video and extract information that helps the driver while driving. As mentioned earlier, the system can only generate warning signals, but this visual information processing system could be integrated with other sensing methods such as lidar, ultrasonic sensors, etc.

Lane departure assistance

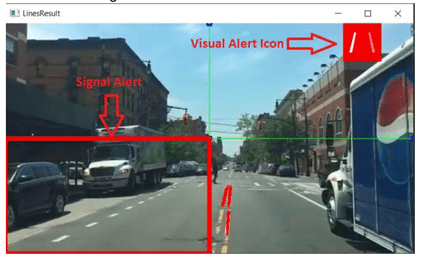

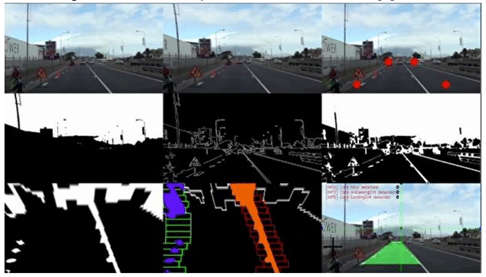

The LDA (Lane Departure Assistance) signals to the driver when the car crosses the lane boundary and moves into the opposite direction of traffic (Figure 6). It can also signal when the vehicle changes lanes without indicating the maneuver, which can lead to accidents involving one or more road users. The system must determine the car’s center relative to the position of the road marking. Because only the visual information acquired through the web camera is used, the proposed approach is to detect the road marking first and then the car’s visual center. Once these two landmarks are known, the car’s position in relation to the markings can be checked (Figure 5).
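Once the marking and the car’s visual center are known, the position check reduces to comparing two horizontal coordinates. The following is a minimal sketch under stated assumptions: the lane is described by the pixel x-positions of its left and right markings at the bottom of the frame, and the tolerance of 25% of the lane width is an arbitrary illustrative value. The function names are hypothetical and not taken from the project.

```python
def lane_offset(left_x: float, right_x: float, car_center_x: float) -> float:
    """Signed offset of the car center from the lane center, in pixels.

    Negative values mean the car is left of the lane center.
    """
    lane_center = (left_x + right_x) / 2
    return car_center_x - lane_center


def is_departing(left_x: float, right_x: float, car_center_x: float,
                 tolerance: float = 0.25) -> bool:
    """Return True when the offset exceeds a fraction of the lane width."""
    lane_width = right_x - left_x
    if lane_width <= 0:
        raise ValueError("right marking must be to the right of the left one")
    offset = lane_offset(left_x, right_x, car_center_x)
    return abs(offset) > tolerance * lane_width


# Markings at x=200 and x=600, car center at x=550: offset 150 > 100.
warn = is_departing(200, 600, 550)
```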

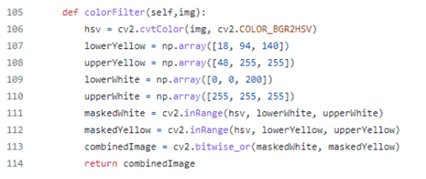

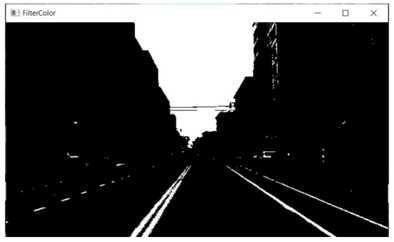

➢ Color Filter – At this stage, the image is filtered by the color range most commonly used for lane markings. In our case, these are yellow and white.

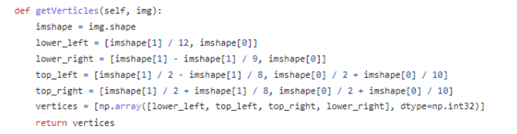

➢ Get Vertices – To define the region of interest, the vertices of this region (Figure 10) are found first.

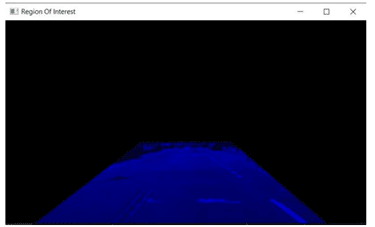

➢ Detect Region of Interest – To increase the efficiency, speed, and quality of processing, a region of interest is established in which the algorithm looks for the lines of the road marking.

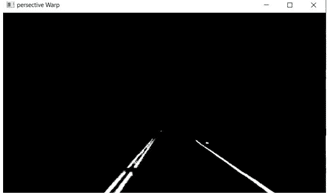

➢ Get Perspective Warp – At this stage, the final image processing procedure takes place before all the detected lines are extracted.

After the lines are detected and stored in the form of matrices, the algorithm can check the landmarks and, if necessary, generate a warning signal (Figure 7).
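The color filter and region-of-interest steps above can be sketched in plain Python. This is an illustration under assumptions, not the project’s implementation: the RGB thresholds for white and yellow, the trapezoid proportions in `roi_vertices`, and all function names are hypothetical.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]   # (R, G, B), each 0..255
Point = Tuple[float, float]    # (x, y) in image coordinates


def is_marking_color(pixel: Pixel) -> bool:
    """Keep pixels that look white or yellow (assumed thresholds)."""
    r, g, b = pixel
    white = min(r, g, b) >= 200
    yellow = r >= 180 and g >= 150 and b <= 120
    return white or yellow


def roi_vertices(width: int, height: int) -> List[Point]:
    """Trapezoid covering the road ahead (assumed proportions)."""
    return [(0, height), (0.45 * width, 0.6 * height),
            (0.55 * width, 0.6 * height), (width, height)]


def inside_polygon(point: Point, polygon: List[Point]) -> bool:
    """Ray-casting test: count edge crossings to the right of the point."""
    x, y = point
    inside = False
    for i in range(len(polygon)):
        x1, y1 = polygon[i]
        x2, y2 = polygon[(i + 1) % len(polygon)]
        if (y1 > y) != (y2 > y):  # edge spans the horizontal ray
            x_cross = x1 + (y - y1) * (x2 - x1) / (y2 - y1)
            if x < x_cross:
                inside = not inside
    return inside


def marking_mask(image: List[List[Pixel]]) -> List[List[bool]]:
    """True where a pixel has a marking color and lies inside the ROI."""
    height, width = len(image), len(image[0])
    polygon = roi_vertices(width, height)
    return [
        [is_marking_color(image[y][x])
         and inside_polygon((x + 0.5, y + 0.5), polygon)
         for x in range(width)]
        for y in range(height)
    ]
```

Restricting the search to the trapezoid discards sky and roadside pixels before any line extraction, which is where the gain in speed comes from.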

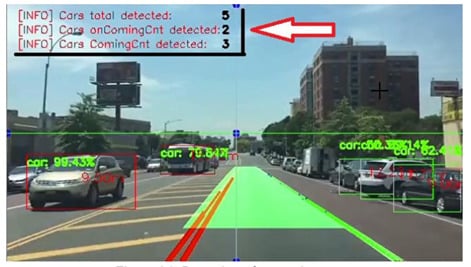

Collision alert assistance

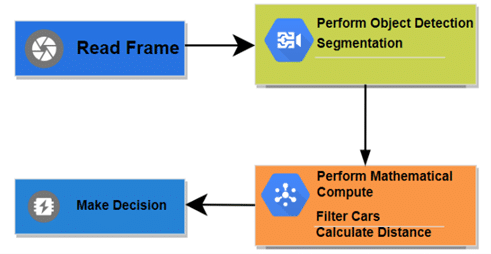

The purpose of the CAS (Collision Alert Assistance) (Figure 13) is to warn the driver of the danger of a collision with other vehicles in road traffic. First, objects must be recognized and detected. The neural network performs this task.

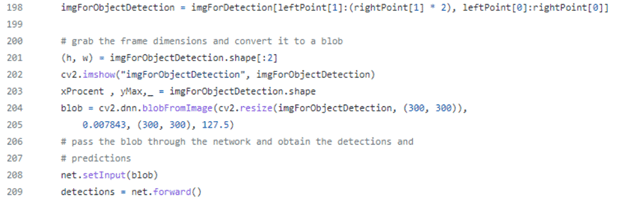

➢ Perform Object Detection – At this step, the acquired frame is processed using software functions (Figure 14) and prepared for the neural network and subsequent object detection.

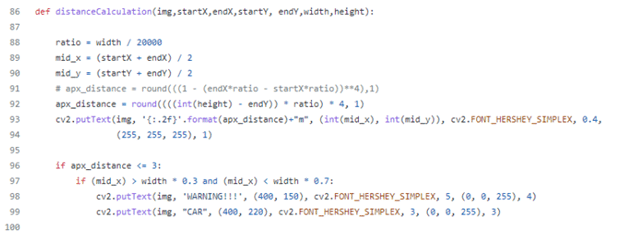

➢ Perform Mathematical Compute of Distance (Figure 15)

After all the steps are done, the system uses a special icon to alert the driver if the distance to another car is too small (Figure 16).
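The article does not give the distance formula, so the sketch below uses one common approximation, the pinhole camera model, in which distance is proportional to the real object width divided by its width in pixels. The assumed car width of 1.8 m, the focal length in pixels, the 10 m alert threshold, and the function names are all illustrative assumptions.

```python
def estimate_distance_m(box_width_px: float, focal_length_px: float,
                        real_width_m: float = 1.8) -> float:
    """Pinhole-model estimate: distance = real width * focal length / pixel width."""
    if box_width_px <= 0:
        raise ValueError("bounding box width must be positive")
    return real_width_m * focal_length_px / box_width_px


def too_close(distance_m: float, min_distance_m: float = 10.0) -> bool:
    """Return True when the estimated distance is below the alert threshold."""
    return distance_m < min_distance_m


# A detected car 360 px wide, seen by a camera with a 1000 px focal length,
# is estimated at about 5 m, which would trigger the alert.
alert = too_close(estimate_distance_m(360, 1000))
```

The estimate depends heavily on the assumed real width and on camera calibration, so it is only suitable as a rough trigger for a visual warning.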

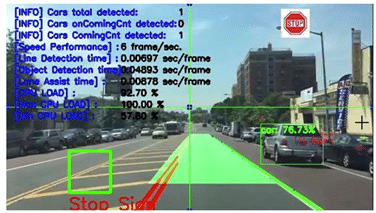

Stop line alert assistance

Traffic signs are an extremely important source of information about the behavior expected of the driver in relation to other road users. Stop Line Alert Assistance (SLA) is therefore another essential feature: it alerts the driver when one or more stop signs are detected on the road.

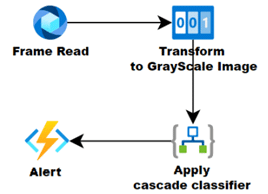

➢ Transform to Grayscale image – This is necessary because cascade classifiers work only with grayscale images (Figure 18).

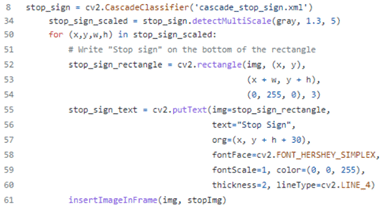

➢ Apply cascade classifier (Figure 19)

➢ To prevent a collision if the driver fails to notice the stop sign, the SLA alerts the driver when the car enters the relevant area around the sign.
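The grayscale step above can be sketched as a weighted sum of the color channels. The article does not say which conversion was used; the weights 0.299, 0.587, and 0.114 below are the widely used luma coefficients and are an assumption here, as are the function names.

```python
from typing import List, Tuple

Pixel = Tuple[int, int, int]  # (R, G, B), each 0..255


def to_gray(pixel: Pixel) -> int:
    """Weighted sum of the channels, rounded to an integer intensity."""
    r, g, b = pixel
    return round(0.299 * r + 0.587 * g + 0.114 * b)


def grayscale_image(image: List[List[Pixel]]) -> List[List[int]]:
    """Convert an RGB image (list of rows) to a single-channel image."""
    return [[to_gray(pixel) for pixel in row] for row in image]


# A red stop-sign pixel next to a white letter pixel.
gray_row = grayscale_image([[(255, 0, 0), (255, 255, 255)]])
```

The single-channel result is what a cascade classifier would then scan for the stop sign pattern.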

Lane line detection

Lane line detection (Figure 21) is an essential component of self-driving cars. It describes the path and drivable area for the vehicle and minimizes the risk of drifting into the oncoming lane. It also makes it possible to monitor objects and their position relative to the autonomous car.

Additional use case for information acquired by the designed system

An Adaptive Lighting System detects the headlamp beams of oncoming traffic or the rear lights of vehicles in front and dims the light in the area of those vehicles to avoid dazzling drivers or pedestrians. An onboard camera provides the information for this system, using computer vision and a neural network to detect cars and pedestrians. When an object of interest is detected, the computer vision algorithm and the models developed using MATLAB generate information for the headlamp control software. The system designed in this research can detect objects such as cars, pedestrians, and buses and determine their position on the road relative to the autonomous vehicle.

Experimental results

After the system was studied and designed, the implementation and validation process encountered many technical and system issues. Exploring their causes gives a better understanding of how intelligent vision systems for autonomous cars operate. The problems encountered fall into two significant categories:

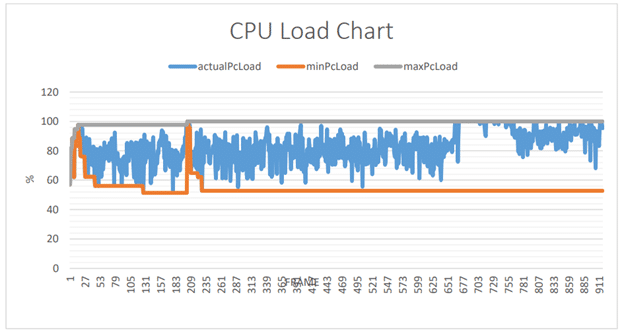

- Hardware limitations – all the hardware limitations (Figure 36) that restricted proper implementation and validation. Intelligent computer vision systems for autonomous cars must, at a minimum, process a large amount of data. As mentioned in previous chapters, an image is a two-dimensional matrix with three levels of depth.

- Low detection rate and a high number of erroneous detections (Figure 24) – better technologies require a better technical and material basis.

Computer vision processing methods alone do not provide safe detection. Based on these results, using technologies that offer a high detection rate is paramount in systems of this type. Hardware limitations (Figure 25) are the main reason a more detailed study of the interaction between neural networks and computer vision algorithms for autonomous driving could not be conducted.

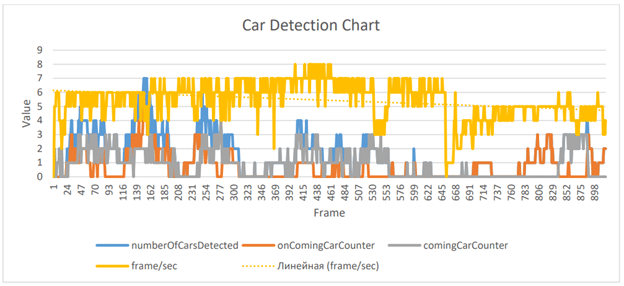

Figure 26 shows the main performance results of all the intelligent assistants for autonomous vehicles. The processing speed is very low, only 5-6 frames per second. This is too slow for real situations because, according to statistics, the average speed of a car is 8.3 m/s. This discrepancy between the car’s average speed and the system’s computing power makes it impossible to test the system in conditions close to reality. This study used neural networks built on the SSD architecture and trained on the Pascal VOC dataset, chosen to balance a high detection rate against speed. The result is a system that makes it possible to use multiple intelligent driver assistants. Still, the quality and safety of such systems require a more capable technical base and a more thorough design.
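The gap between frame rate and vehicle speed can be made concrete by computing how far the car travels between two processed frames. The sketch below uses only the figures quoted above (5-6 frames per second, 8.3 m/s); the function name is hypothetical.

```python
def metres_per_frame(speed_m_s: float, frames_per_second: float) -> float:
    """Distance the car covers between two consecutive processed frames."""
    if frames_per_second <= 0:
        raise ValueError("frame rate must be positive")
    return speed_m_s / frames_per_second


AVERAGE_SPEED_M_S = 8.3  # average car speed quoted in the article

blind_at_5_fps = metres_per_frame(AVERAGE_SPEED_M_S, 5)  # about 1.66 m
blind_at_6_fps = metres_per_frame(AVERAGE_SPEED_M_S, 6)  # about 1.38 m
```

At these rates the car moves roughly 1.4 to 1.7 m between processed frames, so anything that changes within that distance is not seen by the system until the next frame.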

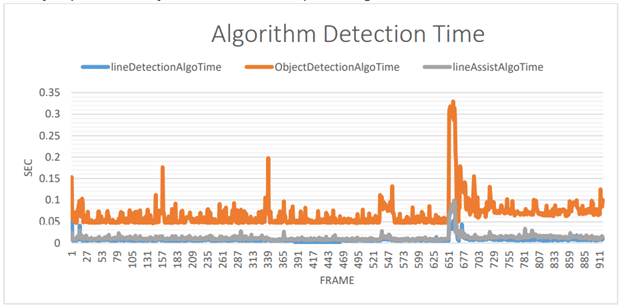

As shown in Figure 27, information processing by artificial intelligence takes longer than the digital image processing techniques used to detect road lines or by the other intelligent assistants. At first glance, neural networks seem slower. Still, they can process and generate far more information than simple machine vision algorithms. Tests have shown that machine vision algorithms consume enormous CPU resources (Figure 39). Whenever information has to be extracted, the frame, which is stored as an array, is processed by the CPU, which requires considerable hardware resources.

Conclusions and future work

Through this dissertation, a broad knowledge base in machine vision and neural networks was acquired experimentally. This knowledge is very useful because it will be used to design and implement more advanced autonomous driving systems. The problems found while designing and implementing the system offer a deeper understanding of future solutions for autonomous driving systems. Most of the goals proposed in this paper have been achieved. As a result, we have a functional experimental system that uses machine vision and neural network techniques to perceive and extract information from road traffic scenes and situations. The focus was on machine vision, digital image processing technologies, and neural networks, each with its own goal but all linked into one system that processes digital images, extracts information, and generates decision-making signals based on it. To create the different autonomous assistants, a wide range of technologies was used, such as a cascade classifier, single shot detection, and distance calculation based on the positional information of an object in a digital frame.

The main problem we encountered during development was the inability to simulate many of the real situations that can occur while cars are moving. This causes gaps in detecting and effectively assessing what is happening on the road. In the future, we plan to use simulators to check how the program behaves in different situations. We found that a cascade classifier can be used to detect more specific objects, such as stop signs, stop lines, or other types of road markings. This solution is simple to implement but has a considerable disadvantage compared to neural networks: the cascade classifier has a low detection rate, and a margin of error of approximately 12% was observed. To reduce this margin of error, a higher-quality video sequence could be used, but this may require computing equipment with very high computing power.

Experiments have shown that complex neural networks that detect complex objects, such as cars, traffic lights, and people, require considerable computing power. Two types of neural networks were used in this paper: YoloV3 and Mobile SSD. YoloV3 is faster but has a higher error rate, which matters greatly in an autonomous car because the lives of real people are at stake. For Mobile SSD, the available hardware (a 7th-generation Intel Core i3 and an NVIDIA GeForce 930MX) was not powerful enough for real-time calculation: the image processing speed was around four frames per second. After writing this research paper and studying how digital image processing systems work in an autonomous car in general, I propose to explore each topic separately in more depth. For example, the detection of traffic lights remains a topic of great interest. More advanced equipment could take the next study in the field of autonomous driving to a higher level, making it possible to create custom algorithms and to train neural networks strictly for the needs of the project. This will allow us to study more unpredictable situations and find solutions, in line with the SAE J3016 standard, that increase the car’s level of autonomy.

We can highlight the main conclusions as follows:

- Understanding the purpose of the system and studying the problem is the first and most important step. This includes studying the particularities of the situation, the possible variants of actions, and the actors involved.

- A clear understanding of the problem leads to a better choice of the equipment needed to solve it.

- A clear vision of how and why different SW and HW components interact.

- Develop SW requirements and try to take most situations into account.
